MV-Fashion: Towards Enabling Virtual Try-On and Size Estimation with Multi-View Paired Data

Nov 16, 2025

Hunor Laczkó

Libang Jia

Phat Truong

Diego Hernández

Sergio Escalera

Jordi Gonzàlez

Meysam Madadi

Abstract

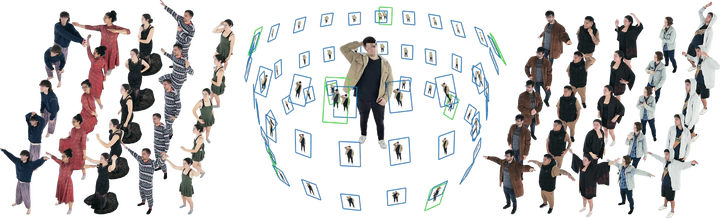

Existing 4D human datasets fall short for fashion-specific research, lacking either realistic garment dynamics or task-specific annotations. Synthetic datasets suffer from a realism gap, whereas real-world captures lack the detailed annotations and paired data required for virtual try-on (VTON) and size estimation tasks. To bridge this gap, we introduce MV-Fashion, a large-scale, multi-view video dataset engineered for domain-specific fashion analysis. MV-Fashion features 3,273 sequences (72.5 million frames) from 80 diverse subjects wearing 3-10 outfits each. It is designed to capture complex, real-world garment dynamics, including multiple layers and varied styling (e.g., tucked shirts, rolled sleeves). A core contribution is a rich data representation that includes pixel-level semantic annotations, ground-truth material properties like elasticity, and 3D point clouds. Crucially for VTON applications, MV-Fashion provides paired data: multi-view synchronized captures of worn garments alongside their corresponding flat, catalogue images. We leverage this dataset to establish baselines for fashion-centric tasks, including virtual try-on, clothing size estimation, and novel view synthesis.

Publication

In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2026 (Highlight)